If your development team has adopted AI coding assistants, workflow automation, or agent frameworks in the past year, there’s a good chance you’re running software built on Anthropic’s Model Context Protocol (MCP). And as of April 2026, there’s a good chance that software is vulnerable to remote code execution — by design.

Security researchers at Ox Security have disclosed what they call a “critical, systemic” architectural flaw baked into the core of every official MCP SDK. The numbers are staggering: over 200 open-source projects, 150 million downloads, 7,000+ publicly accessible servers, and up to 200,000 vulnerable instances worldwide.

What makes this particularly alarming? Anthropic has reportedly characterised the behaviour as “expected.”

TL;DR

- A design flaw in Anthropic’s MCP STDIO transport allows arbitrary OS command execution across all supported SDKs (Python, TypeScript, Java, Rust)

- Over 150 million downloads and up to 200,000 instances are potentially exposed, including popular tools like Cursor, VS Code, Windsurf, and Claude Code

- Anthropic has declined to patch it, calling the behaviour “expected” rather than a vulnerability

- Every developer building on MCP unknowingly inherits this exposure — it’s an AI supply chain problem at scale

- Mitigation requires sandboxing, input validation, network isolation, and treating all MCP configuration as untrusted input

What Exactly Is the Vulnerability?

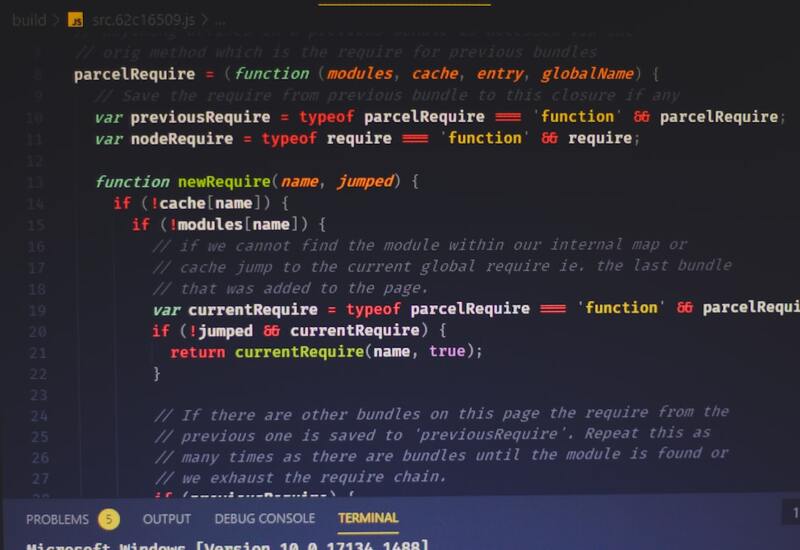

MCP’s STDIO (standard input/output) transport is designed to launch a local server process that communicates with the AI agent over stdin and stdout. Simple enough in concept. But the implementation has a critical flaw: the command configured for the transport is executed whether or not it ever starts a working MCP server.

In practical terms, if an attacker can influence the MCP server configuration — whether through a malicious MCP server listing, a poisoned configuration file, or a compromised dependency — they can inject arbitrary operating system commands. The command runs, the process errors out, and the attacker has already gained execution on the host.

This isn’t a bug in one SDK or one language binding. It’s an architectural decision replicated across every official implementation: Python, TypeScript, Java, and Rust. Any developer building on the Anthropic MCP foundation unknowingly inherits this exposure.
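To make the failure mode concrete, here is a minimal Python sketch of the pattern described above. It is not the code of any MCP SDK: `launch_stdio_server` is a hypothetical stand-in for a STDIO launcher, and the "payload" is a harmless script that writes a marker file and exits. The point it illustrates is that the handshake check happens only after the configured command is already running.

```python
import os
import subprocess
import sys
import tempfile


def launch_stdio_server(command: str, args: list[str]) -> bool:
    """Hypothetical launcher: spawn the configured command, then try a handshake.

    Returns True if the child wrote anything to stdout, False otherwise.
    """
    proc = subprocess.Popen(
        [command, *args],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        stderr=subprocess.DEVNULL,
    )
    # From here on the command is already running with the caller's privileges.
    request = b'{"jsonrpc":"2.0","id":1,"method":"initialize"}\n'
    try:
        out, _ = proc.communicate(input=request, timeout=10)
    except subprocess.TimeoutExpired:
        proc.kill()
        proc.communicate()
        return False
    return bool(out.strip())


def demo() -> tuple[bool, bool]:
    """Launch a harmless 'payload' that is not an MCP server at all."""
    marker = os.path.join(tempfile.mkdtemp(), "side_effect.txt")
    payload = f"open({marker!r}, 'w').write('ran'); raise SystemExit(1)"
    handshake_ok = launch_stdio_server(sys.executable, ["-c", payload])
    # The handshake fails, yet the side effect has already happened.
    return handshake_ok, os.path.exists(marker)
```

In this sketch `demo()` reports a failed handshake alongside a marker file that exists, which is the shape of the problem: rejecting the "server" after the fact does not undo what the command did.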

Why This Is an AI Supply Chain Crisis

The timing couldn’t be worse. MCP has rapidly become the de facto standard for connecting AI agents to external tools and data sources. It’s embedded in the tooling that developers use daily:

- Cursor — the AI-native IDE that’s taken the developer world by storm

- VS Code — via MCP extensions and Copilot integrations

- Windsurf — where exploitation requires zero user interaction (CVE-2026-30615)

- Claude Code — Anthropic’s own CLI agent

- Gemini-CLI — Google’s command-line AI assistant

Beyond IDEs, the vulnerability ripples through the AI agent ecosystem: LangChain, LangFlow, Flowise, LiteLLM, LettaAI, GPT Researcher, and dozens more. These aren’t niche tools — they’re the building blocks of production AI systems across thousands of organisations.

The attack surface is enormous because MCP has become infrastructure. When your infrastructure has a design-level vulnerability, every application built on top of it inherits that weakness.

The “Expected Behaviour” Problem

Perhaps the most concerning aspect of this disclosure is Anthropic’s response. According to Ox Security’s report, Anthropic has repeatedly been asked to patch the vulnerability. Their position? This is “expected behaviour” — not a design flaw requiring correction.

This creates an uncomfortable precedent. When the maintainer of a foundational protocol declines to address a security concern, the burden falls entirely on the downstream ecosystem. Every framework author, every IDE developer, and ultimately every development team using MCP must now implement their own mitigations for a flaw they didn’t create and can’t fix at the source.

It’s reminiscent of other supply chain security debates we’ve seen — the difference being that MCP’s adoption curve has been so steep that the blast radius is already massive.

What Your Team Should Do Right Now

If your organisation uses any MCP-enabled tooling, and in 2026 many development teams do, here’s your action plan:

1. Audit Your MCP Surface

Identify every tool, IDE, and agent framework in your stack that uses MCP. Don’t forget developer workstations — our team regularly finds that the most dangerous attack surfaces are the ones nobody thought to inventory.
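As a starting point for that inventory, the sketch below walks a directory tree looking for MCP client configuration files and lists every entry that launches a local command. The file names and the `mcpServers` / `servers` keys are assumptions based on conventions used by several clients; check them against the tools you actually run.

```python
import json
from pathlib import Path

# Assumed key names and file names; adjust for the clients actually in use.
SERVER_KEYS = ("mcpServers", "servers")
CONFIG_NAMES = ("mcp.json", ".mcp.json", "claude_desktop_config.json")


def stdio_commands(config: dict) -> list[tuple[str, list[str]]]:
    """Return (server name, argv) for every entry that launches a local command."""
    found = []
    for key in SERVER_KEYS:
        servers = config.get(key)
        if not isinstance(servers, dict):
            continue
        for name, entry in servers.items():
            if isinstance(entry, dict) and "command" in entry:
                args = entry.get("args") or []
                found.append((name, [str(entry["command"]), *map(str, args)]))
    return found


def scan(root: Path):
    """Walk a directory tree and yield (file, server name, argv) for each hit."""
    for config_name in CONFIG_NAMES:
        for path in root.rglob(config_name):
            try:
                config = json.loads(path.read_text(encoding="utf-8"))
            except (OSError, ValueError):
                continue  # unreadable or not valid JSON: skip rather than crash
            if isinstance(config, dict):
                for name, argv in stdio_commands(config):
                    yield path, name, argv
```

Running `scan(Path.home())` on a developer workstation is a quick way to see which commands MCP clients are configured to execute there; entries that only carry a URL are ignored because they do not spawn a local process.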

2. Sandbox MCP-Enabled Services

Run MCP servers in isolated environments: containers, VMs, or sandboxed processes. The exploit ends in command execution on whichever host launches the server, so containment is your strongest defence: an injected command that runs inside a locked-down container reaches far less than one running on a developer workstation. If you’re already running containerised workloads (and you should be), extend that discipline to your AI tooling.
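One way to apply this to a STDIO server is to have the client launch `docker run` instead of the server binary. The helper below only builds the argument list; the image name and resource limits are hypothetical, and the sketch assumes the server has been packaged as a container image and needs no network access.

```python
from typing import Optional


def sandboxed_argv(
    image: str, server_args: list[str], workdir: Optional[str] = None
) -> list[str]:
    """Build a `docker run` command line that wraps a STDIO MCP server."""
    argv = [
        "docker", "run",
        "--rm",                                   # discard the container on exit
        "-i",                                     # keep stdin open for the STDIO transport
        "--network", "none",                      # no network access at all
        "--read-only",                            # read-only root filesystem
        "--cap-drop", "ALL",                      # drop all Linux capabilities
        "--security-opt", "no-new-privileges",    # block privilege escalation
        "--pids-limit", "128",                    # illustrative limit
        "--memory", "512m",                       # illustrative limit
    ]
    if workdir is not None:
        # Expose one directory, read-only, rather than the whole home directory.
        argv += ["--mount", f"type=bind,source={workdir},target=/workspace,readonly"]
    return [*argv, image, *server_args]
```

For example, `sandboxed_argv("example/mcp-server:1.0", ["--stdio"])` yields a command whose first element (`docker`) and remaining elements can be used as the `command` and `args` of an MCP configuration entry. Servers that genuinely need network access will need a narrower rule than `--network none`.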

3. Treat MCP Configuration as Untrusted Input

This is the big one. Never allow external or user-supplied MCP server configurations to be loaded without validation. Implement allowlists for permitted MCP servers. Audit any configuration files that specify STDIO commands.
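A minimal sketch of such an allowlist, assuming configuration entries shaped like `{"command": ..., "args": [...]}` under an `mcpServers` key. The allowlisted command lines and package names are placeholders. The check is deliberately strict: the full argument vector must match exactly, and entries that set environment variables are rejected.

```python
# Hypothetical allowlist of complete command lines; nothing else may be launched.
ALLOWED_ARGV = {
    ("npx", "-y", "@example/filesystem-server"),
    ("python", "-m", "example_mcp_server"),
}


def is_allowed(entry: dict, allowed=ALLOWED_ARGV) -> bool:
    """Accept an entry only if its exact argv is on the allowlist."""
    command = entry.get("command")
    args = entry.get("args") or []
    if not isinstance(command, str) or not isinstance(args, list):
        return False
    if not all(isinstance(arg, str) for arg in args):
        return False
    if entry.get("env"):
        # Environment overrides can change what an allowlisted command does.
        return False
    return (command, *args) in allowed


def filter_config(config: dict, allowed=ALLOWED_ARGV) -> tuple[dict, list[str]]:
    """Split a config into accepted entries and the names of rejected ones."""
    accepted, rejected = {}, []
    for name, entry in (config.get("mcpServers") or {}).items():
        if isinstance(entry, dict) and is_allowed(entry, allowed):
            accepted[name] = entry
        else:
            rejected.append(name)
    return {"mcpServers": accepted}, rejected
```

Exact matching is inconvenient when arguments legitimately vary (a project path, say), but any looser rule such as prefix matching needs careful thought about what the trailing arguments can make the command do.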

4. Block Public Network Access

MCP servers should not be accessible from the public internet. Use network segmentation to ensure that even if a command is injected, the blast radius is contained to an isolated network segment.
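For servers that listen on a network socket rather than STDIO, a cheap first check is whether the configured bind address is loopback-only. The helper below is a sketch using Python's standard `ipaddress` module; where the bind address comes from (a config file, an environment variable) is left as an assumption about your deployment.

```python
import ipaddress


def is_loopback_only(host: str) -> bool:
    """Return True if `host` is a loopback address or the literal 'localhost'."""
    if host.strip().lower() == "localhost":
        return True
    try:
        return ipaddress.ip_address(host.strip()).is_loopback
    except ValueError:
        # Not an IP literal: treat unknown hostnames as potentially exposed.
        return False
```

Wildcard binds such as `0.0.0.0` or `::` fail this check, which is the case worth flagging in review. It complements, rather than replaces, firewall rules and network segmentation.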

5. Monitor MCP Tool Invocations

Add observability to your MCP layer. Log every tool invocation, every server spawn, every STDIO command. If something unexpected executes, you want to know about it immediately — not when the incident responders arrive.
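If you control the code that spawns MCP servers, logging can be as simple as a wrapper around the spawn call. The sketch below emits one JSON record per spawn; the logger name, event name, and field names are illustrative, not part of any MCP SDK.

```python
import hashlib
import json
import logging
import subprocess
import time

log = logging.getLogger("mcp.audit")  # hypothetical logger name


def spawn_record(server: str, argv: list[str]) -> dict:
    """Build a structured audit record for one STDIO server spawn."""
    joined = "\x00".join(argv).encode("utf-8")
    return {
        "event": "mcp_stdio_spawn",
        "server": server,
        "argv": argv,
        # Stable fingerprint, handy for alerting on command lines never seen before.
        "argv_sha256": hashlib.sha256(joined).hexdigest(),
        "ts": time.time(),
    }


def spawn_logged(server: str, argv: list[str]) -> subprocess.Popen:
    """Log the spawn first, then start the process."""
    log.info(json.dumps(spawn_record(server, argv)))
    return subprocess.Popen(argv, stdin=subprocess.PIPE, stdout=subprocess.PIPE)
```

Logging before the spawn means the record exists even if the process crashes immediately, and the fingerprint gives your alerting something to compare against a baseline of known command lines.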

6. Install MCP Servers Only from Verified Sources

The MCP ecosystem is still maturing, and not every server listing has been vetted. Treat MCP server installation with the same caution you’d apply to adding a new npm dependency — review the source, check the maintainer, and verify the integrity.
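For the integrity part, one common approach is to pin the SHA-256 digest of a server artefact once it has been reviewed and to refuse anything that does not match. A minimal sketch, assuming you distribute servers as files (archives, wheels, binaries) and keep the pinned digests somewhere you trust:

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA-256 hex digest of a file without loading it all at once."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pin(path: Path, pinned_hex: str) -> bool:
    """Return True only if the file's digest equals the pinned digest."""
    return sha256_of(path) == pinned_hex.strip().lower()
```

A matching digest only proves the file is the one that was reviewed; it says nothing about whether that review was thorough, so it sits alongside the source and maintainer checks rather than replacing them.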

The Bigger Picture: AI Tooling Security Debt

This vulnerability is a symptom of a broader problem we’re seeing across the industry. The rush to adopt AI-powered development tools has outpaced the security scrutiny those tools deserve. Teams that would never ship a web application without a security review are happily installing AI agents with root-level system access and minimal vetting.

At REPTILEHAUS, we’ve been helping teams integrate AI tooling securely — not just making it work, but making it safe. That means treating AI agents as first-class citizens in your security model: identity management, least-privilege access, audit logging, and supply chain verification.

The MCP vulnerability is a wake-up call. The AI supply chain is real, it’s growing fast, and it needs the same rigour we’ve spent decades building into our software supply chains.

What Comes Next

The pressure on Anthropic to address this is mounting. Multiple CVEs have been assigned (including CVE-2025-65720, CVE-2026-30623, CVE-2026-30624, and CVE-2026-30615 among others), and the security community is not letting this one slide. Whether Anthropic changes course or the ecosystem forks around the vulnerability, this is going to shape how we think about AI protocol security for years to come.

In the meantime, don’t wait for upstream fixes. Secure your stack now. If you need help auditing your AI tooling, hardening your MCP configurations, or building a security-first AI integration strategy, get in touch with our team — this is exactly the kind of challenge we specialise in.

📷 Photo by Sasun Bughdaryan (@sasun1990) on Unsplash