For over a decade, the browser was a window to the web. Now it is the workplace itself. Design tools, code editors, CRMs, admin panels, financial dashboards — entire business operations run inside browser tabs. But the security model that governs those tabs was designed for a simpler era, one where a single human sat behind the keyboard and every action in a session could be confidently attributed to that person.

That assumption is quietly falling apart. AI co-pilots, browser extensions, pasted scripts, and in-tab agents now share the same authenticated sessions, the same cookies, and the same tokens as the human user. The browser’s trust boundary has not kept pace with what actually happens inside it.

TL;DR

- The browser has become the primary workplace, but its session security model still assumes a single human actor per session.

- AI co-pilots, extensions, and in-tab agents share authenticated tokens and cookies, creating an attribution crisis — you cannot tell who (or what) performed an action.

- Traditional session management (cookies, JWTs, OAuth tokens) offers no mechanism to distinguish between human-initiated and agent-initiated requests.

- Zero-trust principles need to extend inside the browser: per-action verification, scoped tokens, and agent-aware audit trails are becoming essential.

- Development teams should start implementing session segmentation, least-privilege token scoping, and behavioural anomaly detection now — before regulators mandate it.

The Session Attribution Problem

When you sign into a SaaS application, your browser receives an authentication credential, typically a session cookie or a bearer token such as a JWT. Every subsequent request carries that credential, and the server treats it as proof that you made the request. The mental model is straightforward: one user, one session, one set of permissions.

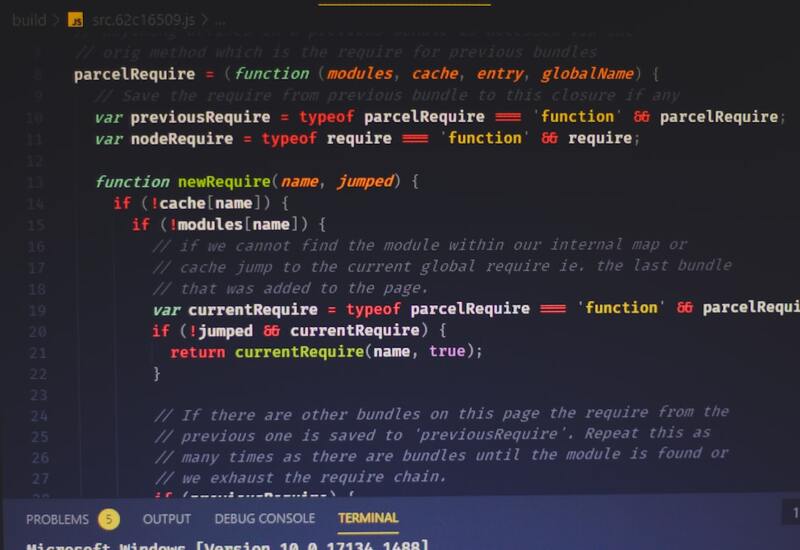

But consider what actually shares that session context today. A grammar-checking extension reads and modifies form fields. An AI co-pilot extension has DOM access to suggest code or summarise documents. A customer support widget injects scripts. A browser-based coding environment runs AI agents that make API calls on your behalf. All of these actors operate within your authenticated session, using your tokens, with your permissions.

From the server’s perspective, every one of those requests looks identical to a request you made yourself. There is no standard mechanism in HTTP, OAuth, or session cookie specifications to distinguish between “the human clicked a button” and “an AI extension fired an API call using the human’s token.”
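To make the attribution gap concrete, here is a minimal sketch of what a conventional cookie check gets to see. The session store, the request shape, and the `initiated_by` field are all hypothetical; the point is only that the initiator is known inside the browser and never reaches the server.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical server-side session store: cookie value -> user id.
SESSIONS = {"abc123": "alice"}


@dataclass(frozen=True)
class Request:
    method: str
    path: str
    session_cookie: str
    # Known only inside the browser; it is never part of what the server receives.
    initiated_by: str


def authenticate(request: Request) -> Optional[str]:
    """Resolve a request to a user the way a typical cookie check does."""
    return SESSIONS.get(request.session_cookie)


def wire_view(request: Request) -> tuple:
    """The fields that actually travel to the server."""
    return (request.method, request.path, request.session_cookie)


# Same tab, same cookie, two different actors.
human_click = Request("POST", "/api/documents/42/share", "abc123", "human")
extension_call = Request("POST", "/api/documents/42/share", "abc123", "ai-extension")

# Both resolve to the same user and are byte-for-byte identical on the wire.
same_user = authenticate(human_click) == authenticate(extension_call)
same_on_wire = wire_view(human_click) == wire_view(extension_call)
```

Any attribution signal has to be added deliberately by the application, because nothing in this flow supplies one.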

This is not a theoretical problem. The recent analysis from The Hacker News puts it plainly: the definition of “actor” has changed, but identity systems have not caught up.

Why Traditional Security Falls Short

Most web application security follows a verify-once, trust-thereafter approach. You authenticate at the start of a session, and subsequent requests ride on that initial trust. Rate limiting, CSRF tokens, and Content Security Policy (CSP) are all useful, but none of them addresses the fundamental question: which entity within the browser initiated this request?

Consider three common security mechanisms and their blind spots:

CSRF tokens protect against cross-origin attacks but do nothing to prevent a same-origin extension or in-page script from using the token. If the agent is inside the page, it has the CSRF token.

Content Security Policy controls which scripts the page itself can load, but extension content scripts are not bound by the page’s CSP by design. A malicious or compromised extension therefore sidesteps these protections entirely.

OAuth scopes define what an application can do, but not what an in-session actor can do. A token scoped to “read and write documents” gives that permission to everything sharing the session — the human, the AI co-pilot, and the compromised extension alike.
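The CSRF blind spot is the easiest of the three to illustrate. The sketch below assumes a simple synchroniser-token scheme with hypothetical helper names, and models the page DOM as a dictionary. A cross-origin attacker cannot read the token, but anything running inside the page can.

```python
import hmac
import secrets


def issue_csrf_token() -> str:
    """Generate an unguessable per-session CSRF token."""
    return secrets.token_urlsafe(32)


def csrf_check(session_token: str, submitted_token: str) -> bool:
    """Constant-time comparison of the stored and submitted tokens."""
    return hmac.compare_digest(session_token, submitted_token)


# The server stores the token and also renders it into the page.
session_csrf_token = issue_csrf_token()
page_dom = {"csrf-token": session_csrf_token}  # stand-in for a <meta> tag or hidden field

# A cross-origin attacker cannot read the page, so it has to guess.
cross_origin_attempt = csrf_check(session_csrf_token, "guessed-value")

# An in-page agent or extension simply reads the token from the DOM.
in_page_agent_attempt = csrf_check(session_csrf_token, page_dom["csrf-token"])
```

The check behaves exactly as designed in both cases. It was simply never meant to separate actors that share an origin.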

The AI Co-Pilot Amplifier

AI co-pilots have made this problem dramatically worse, not because they are inherently insecure, but because they require broad access to be useful. A coding co-pilot needs to read your files, understand your context, and make suggestions. A writing assistant needs access to your document content. An email co-pilot reads your inbox.

Each of these tools operates with the full permissions of the authenticated user. And unlike a human, an AI agent can perform hundreds of actions per minute, exfiltrate data at machine speed, or make subtle modifications that would take a human reviewer hours to spot.

The attack surface is not just the AI tool itself — it is the entire ecosystem of extensions and scripts that can interact with the AI tool’s DOM presence, intercept its API calls, or inject prompts into its context. Prompt injection attacks become session hijacking attacks when the AI co-pilot has your authentication tokens.

What Development Teams Should Do Now

Waiting for browser vendors or standards bodies to solve this is not a strategy. Development teams building SaaS applications and internal tools need to start hardening their session models today. Here is a practical starting point:

1. Implement Session Segmentation

Rather than a single all-powerful session token, issue scoped tokens for different operations. Administrative actions should require a separate, short-lived token that cannot be silently reused by an extension or agent. This is the principle of transaction-level authentication — the same idea behind requiring your password again before changing your email address, extended to every sensitive operation.
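One way to sketch this is with short-lived tokens that carry a single scope and are signed with HMAC. Everything here is an assumption made for illustration: the token format, the scope names, and the hard-coded secret, which a real system would replace with managed keys and most likely a vetted token library.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET = b"demo-only-secret"  # assumption: real deployments load this from a key store


def _sign(payload: bytes) -> str:
    return hmac.new(SECRET, payload, hashlib.sha256).hexdigest()


def issue_token(user: str, scope: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Issue a signed token limited to one scope and a short lifetime."""
    issued_at = time.time() if now is None else now
    claims = {"sub": user, "scope": scope, "exp": issued_at + ttl_seconds}
    payload = json.dumps(claims, sort_keys=True).encode()
    return base64.urlsafe_b64encode(payload).decode() + "." + _sign(payload)


def verify_token(token: str, required_scope: str, now: Optional[float] = None) -> bool:
    """Accept the token only if it is authentic, unexpired, and carries the scope."""
    try:
        encoded, signature = token.split(".", 1)
        payload = base64.urlsafe_b64decode(encoded.encode())
    except ValueError:  # malformed token or invalid base64
        return False
    if not hmac.compare_digest(signature, _sign(payload)):
        return False
    claims = json.loads(payload)
    current = time.time() if now is None else now
    return claims["scope"] == required_scope and current < claims["exp"]


# The everyday session token cannot perform admin actions; the admin token
# is separate and expires after 60 seconds.
general_token = issue_token("alice", "documents:read", ttl_seconds=3600, now=1000.0)
admin_token = issue_token("alice", "admin:write", ttl_seconds=60, now=1000.0)
```

The short lifetime is what limits silent reuse: an extension that captures the admin token has a narrow window, and the long-lived session token never grants the administrative scope at all.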

2. Adopt Per-Action Verification for Sensitive Operations

Critical actions (data exports, permission changes, financial transactions) should require explicit verification that goes beyond possessing a session token. Step-up authentication, device-bound keys, or WebAuthn challenges for high-risk operations create friction that is tolerable for humans but blocks automated abuse.
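The server-side shape of such a gate might look like the sketch below. It does not implement WebAuthn: the `verified_by_authenticator` flag is a placeholder for the result of a real assertion check performed by a WebAuthn library, and the class and method names are hypothetical. What it shows is the binding of each challenge to one user and one action, and the single-use rule.

```python
import secrets
from typing import Dict, Tuple


class StepUpGate:
    """Issues single-use challenges that are bound to a specific user and action."""

    def __init__(self) -> None:
        self._pending: Dict[str, Tuple[str, str]] = {}

    def begin(self, user: str, action: str) -> str:
        """Start a step-up flow and return the challenge to send to the client."""
        challenge = secrets.token_urlsafe(16)
        self._pending[challenge] = (user, action)
        return challenge

    def complete(self, challenge: str, user: str, action: str,
                 verified_by_authenticator: bool) -> bool:
        """Approve only if the challenge matches this user and action.

        The challenge is consumed on every attempt, successful or not,
        so it can never be replayed.
        """
        expected = self._pending.pop(challenge, None)
        return verified_by_authenticator and expected == (user, action)


gate = StepUpGate()
export_challenge = gate.begin("alice", "export:customers")

# The human completes the authenticator prompt for the export.
first_attempt = gate.complete(export_challenge, "alice", "export:customers", True)
# An agent replaying the same challenge is rejected.
replay_attempt = gate.complete(export_challenge, "alice", "export:customers", True)
```

Binding the challenge to the action matters as much as the single-use rule: an approval obtained for a harmless operation cannot be redirected to a data export.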

3. Build Agent-Aware Audit Trails

Your logging should capture not just what happened and which user’s token was used, but how the request was initiated. User-Agent strings, request timing patterns, referrer headers, and interaction metadata (mouse events, keyboard input preceding the action) can help distinguish human-initiated from agent-initiated requests. This is not foolproof, but it gives your security team something to work with during incident response.
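As a rough sketch, an audit record could carry an initiator hint alongside the usual fields. The field names, the self-declared agent label, and the two-second threshold are assumptions chosen for illustration. The interaction metadata is reported by the client and can be spoofed, so the hint is evidence for an investigator rather than proof.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    user_id: str
    action: str
    user_agent: str
    # Milliseconds between the last mouse or keyboard event and the request,
    # as reported by the client; None if the client reported no input at all.
    ms_since_last_input: Optional[int]
    # Agent name if the caller identified itself (hypothetical convention).
    declared_agent: Optional[str]


def classify_initiator(event: AuditEvent) -> str:
    """Attach a coarse, best-effort hint about how the request was initiated."""
    if event.declared_agent:
        return "declared-agent"
    if event.ms_since_last_input is None:
        return "no-interaction-signal"
    if event.ms_since_last_input <= 2000:  # assumed threshold
        return "likely-human"
    return "unattributed"


def to_log_line(event: AuditEvent) -> str:
    """Serialise the event plus its initiator hint as one JSON log line."""
    record = asdict(event)
    record["initiator_hint"] = classify_initiator(event)
    return json.dumps(record, sort_keys=True)


click = AuditEvent("alice", "document.share", "Mozilla/5.0", 180, None)
background = AuditEvent("alice", "document.export", "Mozilla/5.0", None, None)
copilot = AuditEvent("alice", "document.read", "Mozilla/5.0", None, "writing-assistant")
```

Recording the raw signals as well as the derived hint lets the classification rules change later without losing the underlying evidence.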

4. Apply Least-Privilege Token Scoping

If your application exposes APIs, ensure that tokens can be scoped to the minimum permissions required. Personal access tokens, API keys, and OAuth grants should all support granular scoping. When an AI co-pilot only needs read access to documents, it should not have a token that also allows account administration.
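A minimal sketch of the idea, using hypothetical scope names: a delegated token receives the intersection of what the agent asks for and what the user holds, so a co-pilot can never end up with more than it requested or more than the user has.

```python
from typing import FrozenSet, Iterable

# Hypothetical scopes held by the signed-in user.
USER_SCOPES: FrozenSet[str] = frozenset(
    {"documents:read", "documents:write", "account:admin"}
)


def delegate(user_scopes: FrozenSet[str], requested: Iterable[str]) -> FrozenSet[str]:
    """Grant only scopes that were both requested and held by the user."""
    return user_scopes & frozenset(requested)


def is_allowed(granted: FrozenSet[str], required_scope: str) -> bool:
    """Check a single operation against the scopes on the presented token."""
    return required_scope in granted


# The co-pilot asks for read access and receives nothing else.
copilot_scopes = delegate(USER_SCOPES, ["documents:read"])

# Requesting a scope the user does not hold grants nothing extra.
overreach_scopes = delegate(USER_SCOPES, ["documents:read", "billing:write"])
```

With this in place, a compromised co-pilot token can read documents but cannot reach account administration, which is exactly the containment the paragraph above describes.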

5. Deploy Behavioural Anomaly Detection

Machine-speed access patterns look different from human ones. A user who reads three documents per minute and suddenly starts exporting fifty per second is likely not acting manually. Behavioural baselines, combined with real-time anomaly detection, can flag compromised sessions before significant damage occurs.
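The simplest version of this is a sliding-window rate check per session, sketched below. The window size and threshold are assumed values. A production system would more likely learn per-user baselines than rely on one fixed limit.

```python
from collections import deque
from typing import Deque


class RateAnomalyDetector:
    """Flags a session when events inside a sliding time window exceed a limit."""

    def __init__(self, window_seconds: float, max_events: int) -> None:
        self.window_seconds = window_seconds
        self.max_events = max_events
        self._events: Deque[float] = deque()

    def observe(self, timestamp: float) -> bool:
        """Record one event and return True if the session now looks anomalous."""
        self._events.append(timestamp)
        # Drop events that have fallen out of the window.
        cutoff = timestamp - self.window_seconds
        while self._events and self._events[0] <= cutoff:
            self._events.popleft()
        return len(self._events) > self.max_events


# Assumed policy: more than 10 document actions in 60 seconds is suspicious.
detector = RateAnomalyDetector(window_seconds=60, max_events=10)

# A human reading three documents in a minute stays under the limit.
human_flags = [detector.observe(t) for t in (0.0, 20.0, 40.0)]

# A burst of fifty exports within one second trips the detector.
burst_flags = [detector.observe(100.0 + i * 0.02) for i in range(50)]
```

In this run the detector stays quiet for the human-paced events and starts flagging at the eleventh export in the burst, well before all fifty complete.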

The Zero-Trust Browser

The industry has spent years adopting zero-trust networking, the idea that no device or network should be implicitly trusted. The same philosophy now needs to extend inside the browser. Every action, regardless of session state, should be evaluated in context: who initiated it, what permissions does it genuinely require, and does it match expected behaviour?
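Those three questions can be folded into a single per-action decision. The sketch below is one possible shape, with hypothetical field names and a deliberately simple rule order. It is not a reference policy.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class ActionContext:
    initiator: str                  # "human", "agent", or "unknown" (best-effort hint)
    required_scope: str             # permission the action genuinely needs
    granted_scopes: FrozenSet[str]  # scopes on the presented token
    sensitive: bool                 # e.g. exports, permission changes, payments
    anomalous: bool                 # output of behavioural detection


def decide(ctx: ActionContext) -> str:
    """Return "allow", "step-up", or "deny" for a single action."""
    if ctx.required_scope not in ctx.granted_scopes:
        return "deny"      # the token never had this permission
    if ctx.anomalous:
        return "deny"      # behaviour does not match expectations
    if ctx.sensitive or ctx.initiator == "unknown":
        return "step-up"   # require explicit verification before proceeding
    return "allow"


routine_read = ActionContext("human", "documents:read",
                             frozenset({"documents:read"}), False, False)
agent_export = ActionContext("agent", "documents:export",
                             frozenset({"documents:read", "documents:export"}), True, False)
```

The key property is that a valid session is necessary but never sufficient: every branch looks at the action itself, not only at the token.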

Browser vendors are beginning to respond. Chrome’s upcoming isolated web app proposals and Safari’s tightened extension permissions in recent releases are steps in the right direction. But application developers cannot wait for these changes to reach general availability. The threat is here now, and the mitigations are within your control.

Where This Is Heading

Regulators are paying attention. The EU’s Digital Operational Resilience Act (DORA) already requires financial entities to monitor their ICT systems and detect anomalous activity, and session monitoring is a natural part of meeting that obligation. The EU AI Act’s transparency requirements may eventually be read to demand that AI agents acting within authenticated sessions be identifiable and auditable. Organisations that build session segmentation and agent-aware security now will have a significant head start if and when such requirements become enforceable.

At REPTILEHAUS, we work with teams building SaaS platforms, internal tools, and AI-integrated applications where session security is not optional. Whether you need to harden your authentication architecture, implement zero-trust session models, or build audit systems that can distinguish humans from AI agents, our team has the expertise to help. Get in touch — this is one area where acting early pays dividends.