AI agents are no longer experimental. They book meetings, write code, manage infrastructure, and even make purchasing decisions. But here is the uncomfortable truth most teams are ignoring: the more capable your agent becomes, the less your users trust it — unless you design transparency into every critical interaction.

The problem is not capability. It is opacity. When an agent acts on a user’s behalf and they cannot see why it made a particular choice, trust degrades silently. And once trust is gone, adoption collapses — no matter how impressive the demo.

TL;DR

- AI agents that act without explaining themselves erode user trust — even when they get the right answer

- Transparency moments are deliberate UX interventions where agents surface their reasoning, confidence, and intended actions before proceeding

- Five core patterns — action previews, confidence indicators, reasoning traces, checkpoint confirmations, and audit trails — solve most transparency challenges

- The EU AI Act mandates transparency for high-risk AI systems, making this a compliance concern as well as a UX one

- Teams that design for trust now will have a significant adoption advantage over those bolting transparency on later

The Trust Gap in Agentic AI

There is a growing body of evidence that users distrust AI systems that operate as black boxes. A recent Hacker News discussion about LLMs corrupting documents that were delegated to them highlighted a fundamental anxiety: people do not know what the agent actually did until the damage is visible.

This is not a fringe concern. Every team building agentic features — from customer support bots to autonomous DevOps pipelines — faces the same challenge. The agent might be correct 98% of the time, but the 2% where it silently makes the wrong call is what users remember.

Traditional software has an implicit contract: the user clicks a button, the software does what the button says. Agents break this contract. They interpret intent, make decisions, and take actions — often across multiple systems — with varying degrees of autonomy. Without explicit transparency mechanisms, users are left wondering: what did it actually do, and why?

What Are Transparency Moments?

A transparency moment is a deliberate point in an agent’s workflow where it surfaces its reasoning, intended actions, or confidence level to the user before proceeding. It is the UX equivalent of “showing your working” — not to slow the agent down, but to keep the human in the loop at the moments that matter.

The key word is deliberate. You do not need to explain every micro-decision. That would be exhausting and counterproductive. Instead, you identify the critical junctures — the points where the agent’s action is irreversible, high-stakes, or ambiguous — and design transparency into those specific moments.

Think of it like a pilot and autopilot. The autopilot handles routine flight. But before landing, before changing altitude in turbulence, before any high-consequence manoeuvre, the system communicates clearly with the pilot. Agentic AI needs the same discipline.

Five Patterns That Build Trust

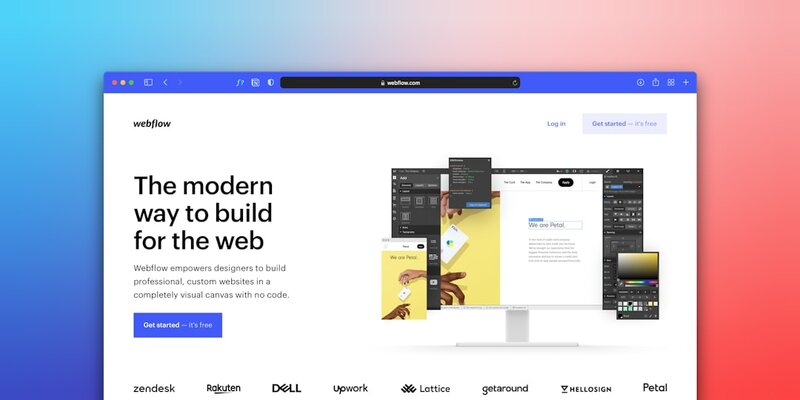

1. Action Previews

Before an agent executes a consequential action, show the user exactly what it intends to do. Not a vague summary — the actual action, with specifics.

Bad: “I’ll update your settings.”

Good: “I’m about to change your billing plan from Pro (€49/month) to Enterprise (€149/month), effective immediately. This will also enable SSO and increase your seat limit from 10 to 50. Shall I proceed?”

Action previews are especially critical for operations that touch payments, permissions, data deletion, or external communications. The preview should include enough detail that the user can make an informed decision without needing to investigate independently.
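As a rough illustration, an action preview can be modelled as a small structure that is rendered and confirmed before anything runs. This is a minimal sketch, not a prescribed design: the `ActionPreview` class, its fields, and the `execute_with_preview` helper are all hypothetical names introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class ActionPreview:
    """Hypothetical description of an action, built before it runs."""
    summary: str                      # the specific change, not a vague label
    effective: str                    # when the change takes effect
    side_effects: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Build the message shown to the user before execution."""
        parts = [f"I'm about to {self.summary}, effective {self.effective}."]
        if self.side_effects:
            parts.append("This will also " + " and ".join(self.side_effects) + ".")
        parts.append("Shall I proceed?")
        return " ".join(parts)


def execute_with_preview(preview, action, confirm):
    """Run `action` only if `confirm`, which is shown the preview, returns True."""
    if not confirm(preview.render()):
        return "cancelled"
    action()
    return "executed"
```

The point of the design is that the action itself cannot run unless the rendered preview has been put in front of the user first.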

2. Confidence Indicators

Not all agent decisions carry equal certainty. Surfacing confidence levels helps users calibrate their own oversight. This does not mean showing a raw probability score — most users will not know what to do with “87% confident”. Instead, use contextual language and visual cues.

For instance, a code review agent might flag issues as “definite bug” (with a clear explanation), “likely issue” (with a suggestion to review), or “style preference” (with a note that this is subjective). Each level implies a different action from the user.
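One way to keep raw scores out of the interface is to translate them into labels at the boundary. The sketch below reuses the three tiers from the code review example; the function name, the thresholds (0.9 and 0.6), and the suggested actions are illustrative assumptions that would need tuning against real data.

```python
def confidence_label(score: float) -> tuple[str, str]:
    """Map a raw score in [0, 1] to a (label, suggested user action) pair.

    The thresholds are assumptions for illustration, not recommended values.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= 0.9:
        return ("definite bug", "fix before merging")
    if score >= 0.6:
        return ("likely issue", "please review")
    return ("style preference", "subjective, safe to ignore")
```

With these assumed thresholds, the “87% confident” case becomes a “likely issue” with a request to review, which tells the user what to do rather than how sure the model is.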

3. Reasoning Traces

When an agent makes a non-obvious decision, provide an expandable reasoning trace. This is not a full chain-of-thought dump — it is a curated summary of the key factors that led to the decision.

A deployment agent that rolls back a release should explain: “Rolled back v2.3.1 because error rate exceeded 5% threshold (currently 7.2%) within 10 minutes of deployment. Affected endpoint: /api/checkout. Previous stable version v2.3.0 restored.” The user did not ask for the rollback, but they can immediately understand and verify the reasoning.
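A curated trace like this is easier to produce if the agent records the key factors as structured data and renders the sentence from them. The following is a minimal sketch under that assumption; `RollbackTrace` and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RollbackTrace:
    """Hypothetical record of the factors behind a rollback decision."""
    version: str
    previous_version: str
    error_rate: float      # observed error rate, as a percentage
    threshold: float       # configured limit, as a percentage
    window_minutes: int
    endpoint: str

    def summary(self) -> str:
        """Render the curated, human-readable explanation."""
        return (
            f"Rolled back {self.version} because error rate exceeded "
            f"{self.threshold:g}% threshold (currently {self.error_rate:g}%) "
            f"within {self.window_minutes} minutes of deployment. "
            f"Affected endpoint: {self.endpoint}. "
            f"Previous stable version {self.previous_version} restored."
        )
```

Keeping the factors structured also means the same record can feed an expandable UI element or a log entry without re-parsing prose.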

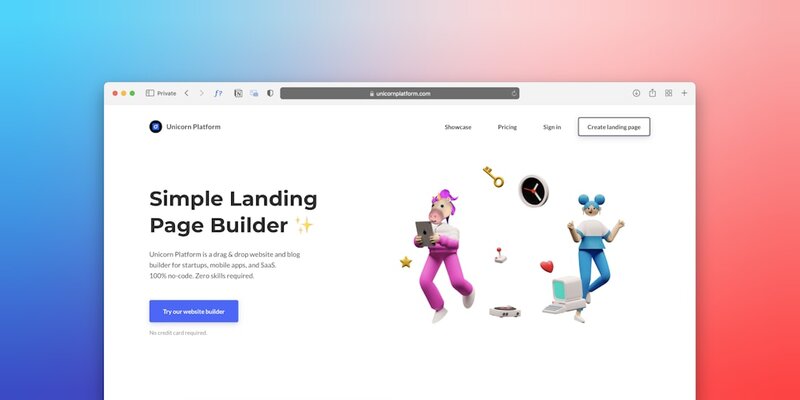

4. Checkpoint Confirmations

For multi-step workflows, insert confirmation checkpoints at natural boundaries. This is particularly important for agents that chain multiple actions together — booking a flight, then a hotel, then a car, for example.

Each checkpoint should summarise what has been completed and what is about to happen, and give the user a clear option to pause, modify, or cancel. The agent should not assume that approval of step one implies approval of step three.
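A checkpointed workflow can be sketched as a loop that asks before every step and stops as soon as the user says anything other than “continue”. The function name, the `ask` callback, and the decision strings here are assumptions made for illustration.

```python
def run_with_checkpoints(steps, ask):
    """Run (name, action) steps, requesting approval before each one.

    `ask(completed, upcoming)` is a hypothetical callback that shows the user
    what is done and what comes next, and returns "continue", "pause",
    "modify", or "cancel". Approval is requested per step, so approving
    step one never implies approving step three.
    """
    completed = []
    for name, action in steps:
        decision = ask(list(completed), name)
        if decision != "continue":
            # Hand control back to the user without running the step.
            return {"status": decision, "completed": completed, "stopped_before": name}
        action()
        completed.append(name)
    return {"status": "done", "completed": completed, "stopped_before": None}
```

For the travel example, a user could approve the flight and hotel and still cancel before the car is booked, with the result recording exactly which steps ran.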

5. Audit Trails

Every agent action should be logged in a human-readable audit trail accessible to the user. This is not just for compliance — it is a trust-building mechanism. When users know they can review what an agent did after the fact, they are more comfortable granting it autonomy in the first place.

Good audit trails include: timestamps, the action taken, the reasoning summary, the user who authorised it (or whether it was autonomous), and the outcome. Make these searchable and filterable — a wall of text helps no one.
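The fields listed above map naturally onto a small record type with a search method on top. This is an in-memory sketch only; `AuditEntry`, `AuditTrail`, and the `search` parameters are hypothetical, and a real system would persist entries to durable storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    timestamp: datetime
    action: str
    reasoning: str
    authorised_by: Optional[str]   # None means the agent acted autonomously
    outcome: str


class AuditTrail:
    """Hypothetical in-memory audit log with basic search and filtering."""

    def __init__(self):
        self._entries = []

    def record(self, action, reasoning, outcome, authorised_by=None):
        entry = AuditEntry(datetime.now(timezone.utc), action, reasoning,
                           authorised_by, outcome)
        self._entries.append(entry)
        return entry

    def search(self, text="", autonomous_only=False):
        """Return entries whose action or reasoning contains `text`."""
        needle = text.lower()
        return [
            e for e in self._entries
            if needle in (e.action + " " + e.reasoning).lower()
            and (not autonomous_only or e.authorised_by is None)
        ]
```

Entries are frozen so that a record cannot be altered after the fact, which matters if the trail is meant to be trusted.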

The Compliance Dimension

This is not purely a UX discussion. The EU AI Act, with its high-risk system provisions taking effect through 2026, explicitly imposes transparency obligations on AI systems. Article 13 requires that high-risk AI systems be designed so that their operation is “sufficiently transparent to enable deployers to interpret a system’s output and use it appropriately.”

If your agent makes decisions that affect people’s access to services, financial outcomes, or employment, it may well fall into a high-risk category, and transparency is then not optional. It is a legal requirement. Teams that build transparency in from the start will avoid painful retrofitting when compliance audits arrive.

Common Mistakes to Avoid

Over-explaining everything. If every action triggers a confirmation dialog, users will develop “approval fatigue” and start clicking “yes” without reading. Reserve transparency moments for genuinely consequential actions.

Technical jargon in explanations. Reasoning traces written for engineers are useless for end users. Tailor the language to your audience. A finance team does not need to know about token counts — they need to know why the agent categorised an expense differently.

Transparency as an afterthought. Bolting an “explain” button onto an opaque system does not build trust. Transparency needs to be part of the interaction design from day one, not a feature you add after users complain.

Hiding uncertainty. Agents that present every output with equal confidence are lying by omission. If the agent is uncertain, say so. Users respect honesty far more than false confidence.

Practical Implementation

If you are building agentic features today, here is a starting framework:

- Map your agent’s decision points. List every action your agent can take autonomously. Categorise them by reversibility and impact.

- Assign transparency levels. Low-impact, reversible actions (sorting a list, summarising text) can run silently. High-impact or irreversible actions (sending emails, modifying data, making purchases) need explicit transparency moments.

- Design the disclosure format. For each transparency moment, decide: action preview, confidence indicator, reasoning trace, or checkpoint confirmation? Usually one or two patterns suffice per interaction.

- Build the audit layer. Log everything from day one. It is vastly easier to build this into the architecture than to retrofit it.

- Test with real users. Watch where they hesitate, where they blindly approve, and where they lose confidence. Adjust your transparency moments accordingly.
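The first two steps of this framework can be captured in a few lines: categorise each action by reversibility and impact, then derive its transparency level from that. The sketch below follows the rule stated above; the enum, the function name, and the action catalogue are illustrative assumptions.

```python
from enum import Enum


class Level(Enum):
    SILENT = "run silently, log only"
    EXPLICIT = "requires a transparency moment"


def transparency_level(reversible: bool, high_impact: bool) -> Level:
    """High-impact or irreversible actions get an explicit transparency moment."""
    if high_impact or not reversible:
        return Level.EXPLICIT
    return Level.SILENT


# Hypothetical action catalogue: name -> (reversible, high_impact)
ACTIONS = {
    "sort_list": (True, False),
    "summarise_text": (True, False),
    "send_email": (False, True),
    "modify_data": (True, True),
    "make_purchase": (False, True),
}

levels = {name: transparency_level(*flags) for name, flags in ACTIONS.items()}
```

Keeping the catalogue as data makes it easy to review with product and compliance stakeholders, and to adjust after user testing.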

Where This Is Heading

As agents become more autonomous — managing infrastructure, handling customer interactions, writing and deploying code — the transparency challenge will only intensify. The teams that solve this well will build products people actually trust enough to use. The teams that ignore it will build impressive demos that never achieve real adoption.

At REPTILEHAUS, we have been designing and building agentic systems for clients across Dublin and beyond — from AI-powered customer service platforms to autonomous DevOps pipelines. The transparency layer is never an afterthought in our builds. If you are planning an AI agent project and want to get the trust equation right from the start, get in touch.