If your development team has spent the past eighteen months assembling an AI toolkit piece by piece — a code assistant here, a security scanner there, an agent orchestrator bolted on top — you are not alone. But in May 2026, the landscape is shifting fast. The era of stitching together standalone AI tools is ending. In its place, integrated enterprise AI development stacks are emerging, and the implications for how your team builds, secures, and ships software are significant.

TL;DR

- The AI development toolchain is consolidating from standalone tools into integrated enterprise stacks: the Snyk and Claude integration, the Opsera and Cursor partnership, and the Coder Agents beta were all announced in the past fortnight.

- Security is becoming embedded in the AI coding loop rather than bolted on after the fact, with Snyk integrating Anthropic’s Claude directly into vulnerability detection workflows.

- Self-hosted AI agent infrastructure (Coder Agents) is giving enterprises the control they need to run agentic development at scale without sending code to third-party clouds.

- Teams that delay consolidation risk tool sprawl, inconsistent governance, and mounting integration tax — a hidden cost that compounds with every disconnected AI tool added.

- The smart play is to evaluate your AI dev stack as a unified platform decision, not a series of individual tool choices.

Three Announcements That Signal the Shift

In the space of a single week in May 2026, three announcements crystallised a trend that has been building for months.

Snyk + Claude: Security Meets the AI Coding Loop

Snyk’s integration of Anthropic’s Claude models into its AI Security Platform marks a fundamental change in how security fits into AI-assisted development. Rather than scanning code after it has been written (the traditional SAST/DAST approach), Snyk is embedding Claude directly into the vulnerability detection workflow. The result is security analysis that takes context into account rather than relying on pattern matching alone.

This matters because AI-generated code has a well-documented blind spot problem. As we covered in our piece on security blind spots in AI-generated code, AI assistants tend to produce code that looks correct but harbours subtle vulnerabilities — broken access control, insecure defaults, dependency hallucinations. Embedding a security-aware LLM directly in the scanning pipeline closes the feedback loop. The AI that helps you write code and the AI that audits it are now part of the same system.

Opsera + Cursor: Guardrails Inside the IDE

Opsera’s partnership with Cursor takes a different but complementary angle. Opsera’s DevSecOps agents now embed directly inside Cursor’s AI-powered IDE, enforcing enterprise-grade security, compliance, and architectural guardrails at the point of code generation — not in a separate CI/CD check twenty minutes later.

This is the logical next step from what we discussed in our post on DevSecOps in 2026. If security belongs in the pipeline, it belongs even earlier — in the editor, at the moment code is being generated. The Opsera-Cursor integration means that when an AI agent scaffolds a feature, the guardrails are already in place. No context switching. No “we’ll fix it in review.”

Coder Agents: Self-Hosted AI at Enterprise Scale

Perhaps the most architecturally significant announcement is Coder’s launch of Coder Agents in beta. This gives enterprises the ability to run AI-driven developer workflows entirely on self-hosted infrastructure, using any AI model they choose.

For teams in regulated industries such as finance, healthcare, and defence, sending proprietary code to a third-party AI service has often been a non-starter. Coder Agents addresses this by bringing the agent runtime in-house. Your code stays on your infrastructure. You choose which models run against it. Your governance policies apply natively.

Why This Consolidation Was Inevitable

The fragmented AI dev tool landscape was never sustainable. Consider what a typical mid-sized development team’s AI toolkit looked like at the start of 2026:

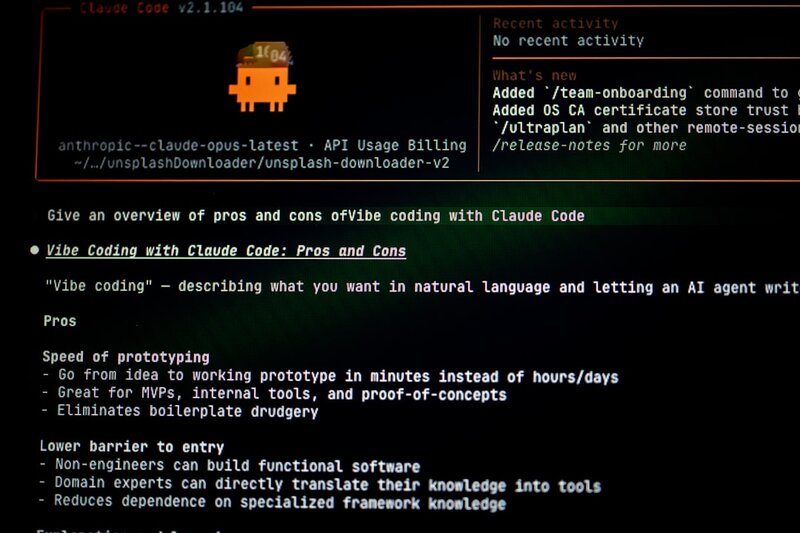

- A code assistant (Copilot, Cursor, or Claude Code)

- A separate security scanner (Snyk, Semgrep, or SonarQube)

- An agent orchestrator (LangChain, CrewAI, or custom)

- A CI/CD pipeline with AI-enhanced testing bolted on

- An observability layer trying to make sense of it all

Each tool has its own authentication model, its own context window, its own understanding of your codebase. None of them talk to each other natively. The result? Integration tax — the hidden cost of making your tools work together, keeping them in sync, and ensuring consistent governance across all of them.
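To make the compounding concrete, here is a rough back-of-the-envelope sketch. It assumes the worst case, where every pair of tools needs its own connection to share context; real stacks will usually have fewer links, so treat the numbers as an upper bound rather than a measurement. The function name is illustrative only.

```python
from math import comb


def pairwise_boundaries(tool_count: int) -> int:
    """Upper bound on tool-to-tool integration boundaries.

    Assumption: in the worst case every pair of tools needs its own
    integration, so the count is "n choose 2".
    """
    return comb(tool_count, 2)


if __name__ == "__main__":
    for n in range(2, 8):
        print(f"{n} tools: up to {pairwise_boundaries(n)} integration boundaries")
```

Under that assumption, the five-tool kit listed above has up to ten boundaries to maintain, and adding a sixth tool adds five more.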

As we explored in our post on agent sprawl, ungoverned AI tools multiply silently. A developer installs an extension. A team lead spins up an agent. Before long, you have a dozen AI touchpoints with no unified policy on what they can access, what they can modify, or how their outputs are audited.

Consolidation is not about vendor lock-in. It is about reducing the surface area your team needs to govern.

What the Integrated Stack Looks Like

The emerging pattern is clear. The AI development stack of late 2026 will likely have three tightly coupled layers:

1. The Intelligent IDE Layer

Code generation, refactoring, and architectural suggestions — with security and compliance guardrails baked in, not bolted on. The Opsera-Cursor model is the template here.

2. The Security and Quality Layer

AI-powered analysis that understands the full context of your application, not just individual files. Snyk+Claude represents this — vulnerability detection that reasons about intent, not just syntax.

3. The Agent Infrastructure Layer

The runtime environment where AI agents execute development tasks autonomously — testing, deployment, documentation, dependency updates. Coder Agents and NVIDIA’s Agent Toolkit (announced at GTC with Adobe, Atlassian, Salesforce, SAP, ServiceNow, and Siemens as launch partners) are competing to own this layer.

What Your Team Should Do Now

You do not need to rip and replace your entire toolchain tomorrow. But you should be making decisions with consolidation in mind.

Audit Your Current AI Tool Surface

Map every AI tool your team uses — sanctioned or not. Count the integration points. Identify where context is lost between tools. This audit alone often reveals surprising sprawl.
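A spreadsheet is enough for this audit, but a small script can keep the inventory honest over time. The following is a minimal sketch, not a prescribed method: the `AITool` fields, the tool names, and the report keys are all hypothetical and should be adapted to whatever your team actually tracks.

```python
from dataclasses import dataclass, field


@dataclass
class AITool:
    name: str
    category: str
    sanctioned: bool
    # Names of other tools this one shares context with natively.
    integrates_with: set[str] = field(default_factory=set)


def audit(tools: list[AITool]) -> dict:
    """Summarise sprawl: unsanctioned tools, links, and isolated tools."""
    names = {t.name for t in tools}
    # Store each link as an unordered pair so A->B and B->A count once.
    links = {
        frozenset((t.name, other))
        for t in tools
        for other in t.integrates_with
        if other in names and other != t.name
    }
    connected = {name for link in links for name in link}
    return {
        "tool_count": len(tools),
        "integration_points": len(links),
        "unsanctioned": sorted(t.name for t in tools if not t.sanctioned),
        # Tools with no native link are where context is most likely lost.
        "isolated": sorted(names - connected),
    }


# Hypothetical inventory for illustration only.
inventory = [
    AITool("ide-assistant", "code assistant", True, {"security-scanner"}),
    AITool("security-scanner", "security", True),
    AITool("agent-orchestrator", "agents", True),
    AITool("browser-extension", "code assistant", False),
]
report = audit(inventory)

if __name__ == "__main__":
    for key, value in report.items():
        print(f"{key}: {value}")
```

The `isolated` and `unsanctioned` lists are the two places to start the conversation: the first shows where context is lost, the second shows what nobody approved.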

Evaluate Platforms, Not Point Solutions

The next time you add an AI capability, ask whether the platform you are already using offers it natively. A slightly less polished integrated feature often beats a best-in-class standalone tool that creates yet another integration boundary.

Prioritise Self-Hosted Options for Sensitive Code

If your codebase contains proprietary algorithms, customer data, or regulated information, look seriously at self-hosted agent infrastructure like Coder Agents. The cloud-first default served us well for a decade, but AI agents with deep codebase access change the calculus.

Demand Unified Governance

Whatever stack you choose, insist on a single pane of glass for AI governance — who can use which tools, what they can access, and how their outputs are audited. If your vendor cannot provide this, your team will end up building it themselves, and that is never where you want your engineering hours going.
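As a rough illustration of what unified governance means in practice, the sketch below puts the three questions (who, which tool, what access) behind one check and records every decision in one audit log. It is a toy model under stated assumptions, not any vendor's API: the `Policy` and `Governance` names, the roles, and the path prefixes are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Policy:
    role: str
    tool: str
    allowed_paths: tuple[str, ...]  # path prefixes the tool may touch


class Governance:
    """One place to decide and record AI tool access (illustrative only)."""

    def __init__(self, policies: list[Policy]) -> None:
        self._policies = {(p.role, p.tool): p for p in policies}
        self.audit_log: list[dict] = []

    def check(self, role: str, tool: str, path: str) -> bool:
        policy = self._policies.get((role, tool))
        # Deny by default: no matching policy means no access.
        allowed = policy is not None and any(
            path.startswith(prefix) for prefix in policy.allowed_paths
        )
        # Every decision is logged, whether allowed or denied.
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "role": role,
                "tool": tool,
                "path": path,
                "allowed": allowed,
            }
        )
        return allowed


# Hypothetical policy set for illustration.
gov = Governance([Policy("backend-dev", "ide-assistant", ("services/api/",))])
ok = gov.check("backend-dev", "ide-assistant", "services/api/handlers.py")
denied = gov.check("contractor", "ide-assistant", "services/billing/invoice.py")

if __name__ == "__main__":
    print(ok, denied, len(gov.audit_log))
```

The point of the sketch is the shape rather than the detail: one policy store, one decision function, one log. If each tool in your stack answers these questions separately, that is the gap a platform should close.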

The Bigger Picture

Git pushes increased 78% year-on-year globally in Q1 2026, according to Microsoft’s latest data. Software developer employment is up 4% over the same period. The code is flowing faster than ever, and AI is the accelerant.

But velocity without governance is just organised chaos. The consolidation of the AI development stack is not a trend to watch from the sidelines — it is the infrastructure decision that will determine whether your team’s AI-accelerated output is an asset or a liability.

The teams that get this right will ship faster, more securely, and with fewer integration headaches. The teams that do not will drown in tool sprawl, context fragmentation, and governance gaps that widen with every sprint.

Need help evaluating your AI development stack or building a governance framework that scales? At REPTILEHAUS, we help development teams navigate exactly these decisions — from toolchain architecture to security integration to self-hosted AI infrastructure. Get in touch.

📷 Photo by Bernd Dittrich on Unsplash